Publications

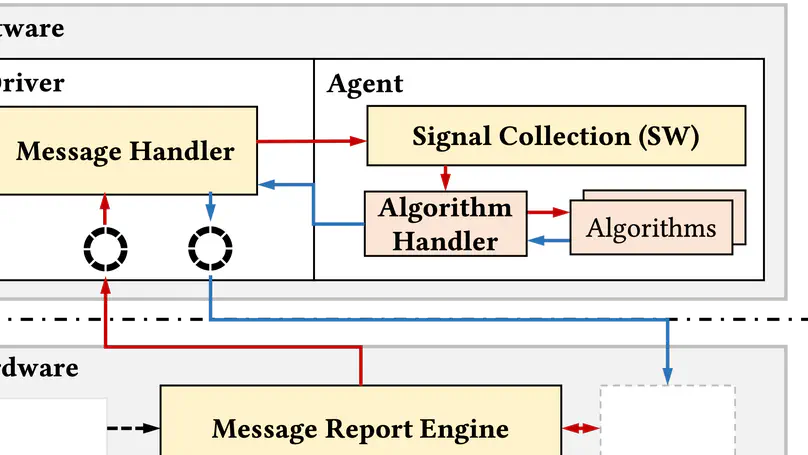

Congestion control (CC) is crucial for datacenter networks (DCNs), and CC frameworks have been proposed to let users easily deploy new algorithms tailored to diverse scenarios. Such a framework should be both high-performance and generic: (i) it should allow CC to achieve high throughput and low latency, and (ii) it should support various algorithms and congestion scenarios. However, prior works either suffer from performance limitations or lack sufficient generality. CCP experiences throughput degradation under heavy traffic, while DOCA-PCC improves performance using hardware but lacks support for detecting and mitigating host congestion. In this paper, we present Taurus, a high-performance and generic CC framework built through hardware-software co-design. To this end, Taurus partitions CC functions into distinct tasks and maps them onto suitable hardware/software components while mitigating excessive interaction overhead. Specifically, Taurus designs a collaborative signal collection mechanism to support diverse congestion feedback, a type-aware message report engine to reduce communication overhead, and software built-in handlers to facilitate deployments. We have implemented a fully functional Taurus on commodity servers with FPGA-based NICs. Experimental results show that Taurus supports various CC algorithms in achieving their near-native performance. Compared to CCP, Taurus improves throughput by 32.3%, reduces latency by 96.4%, and lowers CPU overhead by 158.7%. Compared to DOCA-PCC, Taurus improves throughput by 9.3% and reduces latency by 28.8%.

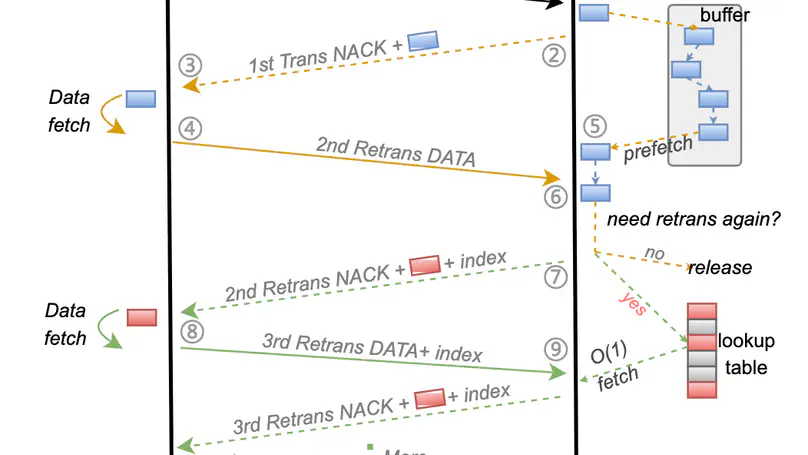

Modern cloud service providers and AI innovators are expanding their services across data centers to harness scale-out benefits while minimizing wide-area network (WAN) communication overhead. Although Remote Direct Memory Access (RDMA) has become the de facto standard for high-speed data center networks, extending its benefits to WANs faces fundamental challenges: high bandwidth-delay products (BDP), frequent packet loss, and the inherent tension between performance and resource efficiency in existing approaches. To address these limitations and empower cross-datacenter services, we propose OmniDMA, a novel PFC-free RDMA architecture designed for loss-prone WAN environments. Our design introduces two core innovations: 1) the Adamap data structure, which enables flexible loss recording through context compression and management, and 2) a three-tier control path architecture that decouples retransmission from the primary transmission control path, achieving high performance at WAN scale with minimal RNIC SRAM consumption. This design further reduces context management and scheduling overhead to guarantee line-rate processing under packet loss. Preliminary results demonstrate that OmniDMA achieves efficient RDMA communication over lossy WANs with constant resource consumption.

Industrial large-scale recommendation systems mostly follow a two-stage paradigm: a retrieval stage and a ranking stage. The retrieval stage aims to select thousands of relevant candidates from a vast corpus with millions or more items, and thus often becomes the performance bottleneck. Offloading the retrieval stage to hardware is a promising solution. Nevertheless, previous solutions either fail to achieve optimal performance or lack sufficient generality to support fuzzy search, which has been widely used in modern retrieval systems to improve their scalability and efficiency. In this paper, we present SNARY, a generic SmartNIC-accelerated retrieval system, to facilitate both exact and fuzzy search. Specifically, SNARY utilizes High-Bandwidth Memory (HBM) for corpus storing and scanning and designs two types of search engines: a data-parallel exact search engine and a Locality-Sensitive Hashing (LSH)-based fuzzy search engine. Furthermore, SNARY employs a pipeline-based approach to select Top-K items and streams the data flow of the whole system. We have implemented SNARY on Xilinx commercial SmartNICs. Experimental results show that SNARY achieves a 20.91%-83.88% lower latency and a 1.26×-18.27× higher latency-bounded throughput in exact search scenarios, and achieves an 85.13%-87.40% lower latency and a 20.18×-23.81× higher latency-bounded throughput in fuzzy search scenarios in comparison with the state-of-the-art hardware-based solutions.
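
As a rough illustration of the two search modes named in the abstract above, the following Python sketch contrasts an exact scan with an LSH-based fuzzy search, each followed by Top-K selection. The random-hyperplane hash family, the inner-product score, and all names (`exact_top_k`, `HyperplaneLSH`, `fuzzy_top_k`) are assumptions made for this example. It is a software analogy under those assumptions, not SNARY's hardware design.

```python
import heapq
import random


def dot(a, b):
    """Inner product of two equal-length vectors (assumed relevance score)."""
    return sum(x * y for x, y in zip(a, b))


def exact_top_k(corpus, query, k):
    """Exact search: score every item in the corpus and keep the k best ids."""
    return heapq.nlargest(k, range(len(corpus)),
                          key=lambda i: dot(corpus[i], query))


class HyperplaneLSH:
    """Random-hyperplane LSH: vectors with the same sign pattern share a bucket."""

    def __init__(self, dim, num_bits, seed=0):
        rng = random.Random(seed)  # seeded so the sketch is reproducible
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)]
                       for _ in range(num_bits)]
        self.buckets = {}

    def signature(self, vec):
        # One bit per hyperplane: which side of the plane the vector lies on.
        return tuple(dot(p, vec) >= 0.0 for p in self.planes)

    def add(self, item_id, vec):
        self.buckets.setdefault(self.signature(vec), []).append(item_id)

    def candidates(self, query):
        return self.buckets.get(self.signature(query), [])


def fuzzy_top_k(index, corpus, query, k):
    """Fuzzy search: score only the items in the query's bucket, then Top-K."""
    return heapq.nlargest(k, index.candidates(query),
                          key=lambda i: dot(corpus[i], query))


corpus = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.9, 0.1]]
index = HyperplaneLSH(dim=2, num_bits=4)
for item_id, vec in enumerate(corpus):
    index.add(item_id, vec)
```

The fuzzy path trades recall for speed: it may miss relevant items that hash to a different bucket, but it scores far fewer candidates than the exact scan.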

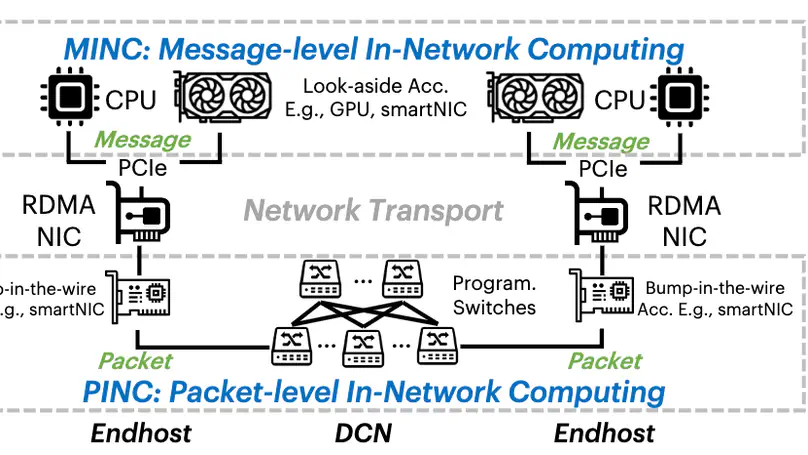

Message-level in-network computing (MINC) is emerging as a promising hardware acceleration method that utilizes accelerators to offload message-level computation and enhance application performance in the datacenter. However, developing MINC applications is challenging on the communication side due to poor portability and under-utilized resources. In this paper, we present LEO, a generic and efficient communication framework for MINC. LEO facilitates portability across both applications and hardware by introducing a communication path abstraction, which is capable of describing generic applications with predictable communication performance across diverse hardware. It further incorporates built-in multi-path communication over the CPU and the accelerator to enhance communication efficiency. We have implemented a prototype of LEO and evaluated it with four case studies on testbeds covering FPGA-based and SoC-based SmartNICs and GPU. Experiments show that LEO achieves genericity and efficiency across MINC applications, yielding 1.2–4.7× speedup over baselines with negligible overhead.

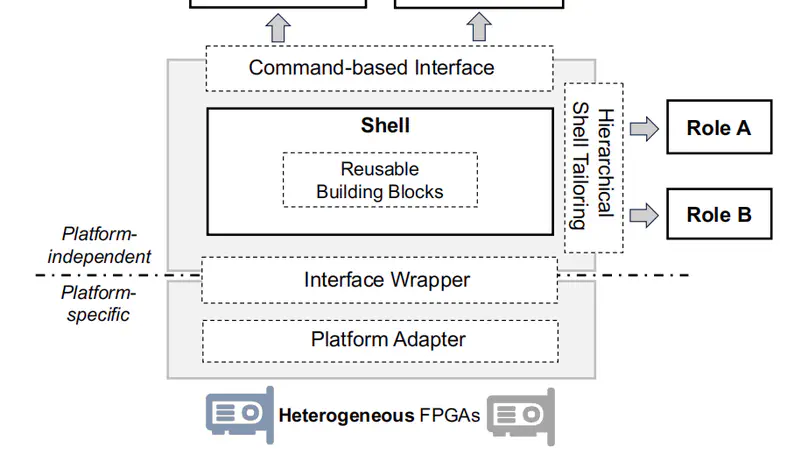

FPGAs are gaining popularity in the cloud as accelerators for various applications. To make FPGAs more accessible for users and streamline system management, cloud providers have widely adopted the shell-role architecture on their homogeneous FPGA servers. However, the increasing heterogeneity of cloud FPGAs poses new challenges for this architecture. Previous studies either focus on homogeneous FPGAs or only partially address the portability issues for roles, while still requiring laborious shell development for providers and ad-hoc software modifications for users. This paper presents Harmonia, a unified framework for heterogeneous FPGA acceleration in the cloud. Harmonia operates on two layers: a platform-specific layer that abstracts hardware differences and a platform-independent layer that provides a unified shell for diverse roles and host software. In detail, Harmonia provides automated platform adapters and lightweight interface wrappers to manage hardware differences. Next, it builds a modularized shell composed of Reusable Building Blocks and employs hierarchical tailoring to provide a resource-efficient and easy-to-use shell for different roles. Finally, it presents a command-based interface to minimize software modifications across distinct platforms. Harmonia has been deployed in a large service provider, Douyin, for several years. It reduces shell development workloads by 69%-93% and simplifies role and software configurations with negligible overhead (<0.63%). Compared with other frameworks, Harmonia supports cross-vendor FPGAs, reduces resource consumption by 3.5%-14.9% and simplifies software configurations by 15-23x while maintaining comparable performance.

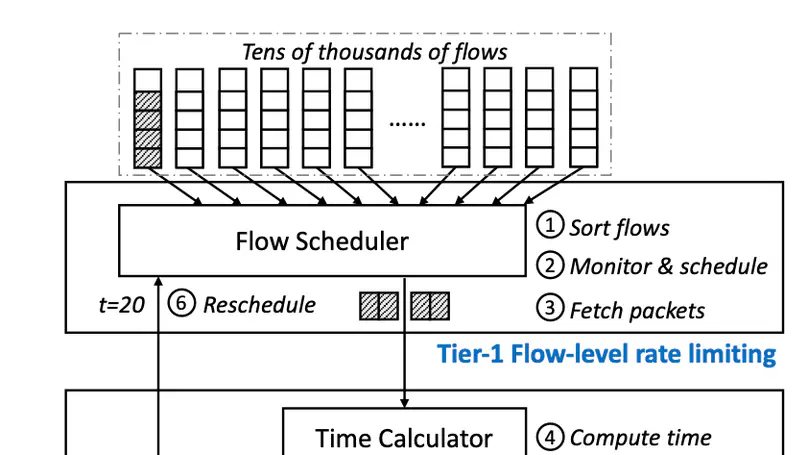

RDMA NICs desire a rate limiter that is accurate, scalable, and fast: to precisely enforce policies such as congestion control and traffic isolation, to support a large number of flows, and to sustain high packet rates. Prior works such as SENIC and PIEO can achieve accuracy and scalability, but they are not fast enough and thus fail to fulfill the performance requirement of RNICs, due primarily to their monolithic design and one-packet-per-sorting transmission. We present Tassel, a hierarchical rate limiter for RDMA NICs that can deliver high packet rates by enabling multiple-packet-per-sorting transmission, while preserving accuracy and scalability. At its heart, Tassel renovates the workflow of the rate limiter hierarchically: it first applies scalable rate limiting to the flows to be scheduled, then applies accurate rate limiting to the packets to be transmitted, while leveraging adaptive batching and packet filtering to improve the performance of these two steps. We integrate Tassel into the RNIC architecture by replacing the original QP scheduler module and implement the prototype of Tassel using FPGA. Experimental results show that Tassel delivers 125 Mpps packet rate, outperforming SENIC and PIEO by 3.6×, while supporting 16 K flows with low resource usage, 7.5%-25.6% as compared to SENIC and PIEO, and preserving high accuracy, precisely enforcing rate limits from 100 Kbps to 100 Gbps.
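
For readers unfamiliar with per-flow rate limiting, the sketch below shows a plain token bucket in Python, a common building block for enforcing a rate limit on one flow. It is not Tassel's hierarchical design; the class name `TokenBucket`, its parameters, and the explicit `now` timestamp argument are assumptions chosen to keep the example deterministic.

```python
class TokenBucket:
    """Generic token bucket: tokens are bytes, refilled at `rate` bytes/second."""

    def __init__(self, rate, burst):
        self.rate = rate        # refill rate in bytes per second
        self.burst = burst      # maximum number of tokens the bucket can hold
        self.tokens = burst     # start with a full bucket
        self.last = 0.0         # timestamp of the last refill, in seconds

    def allow(self, size, now):
        """Return True and consume tokens if a packet of `size` bytes may be sent."""
        # Refill for the elapsed time, capped at the burst size.
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= size:
            self.tokens -= size
            return True
        return False


bucket = TokenBucket(rate=1000, burst=1500)
first = bucket.allow(1500, now=0.0)    # the full burst is available at start
second = bucket.allow(1, now=0.0)      # bucket is empty, packet must wait
third = bucket.allow(1000, now=1.0)    # one second refills 1000 bytes
```

A limiter of this kind is accurate for a single flow; the difficulty the abstract describes lies in keeping that accuracy while scheduling many flows at high packet rates.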

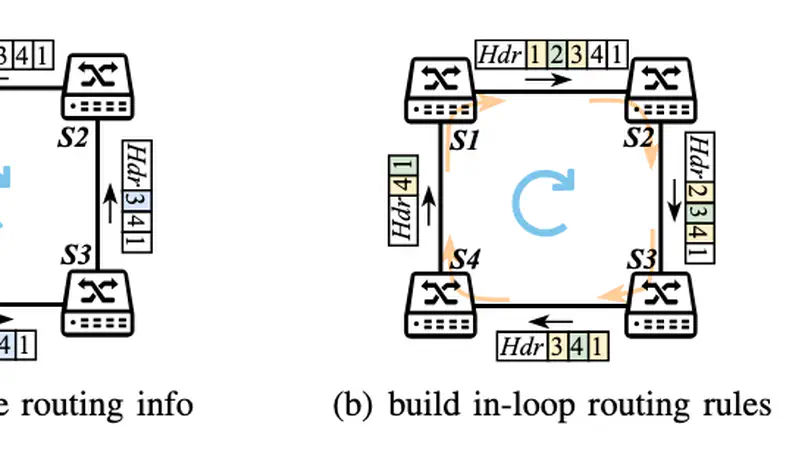

RDMA over Converged Ethernet (RoCEv2) employs Priority-based Flow Control (PFC) for a lossless fabric to maintain high performance. However, PFC can cause deadlocks, which pause traffic and potentially lead to severe exceptions for applications. Existing solutions solve deadlocks at a considerable cost, resulting in degradation of end-to-end network performance. We present Roundabout, a data plane scheme designed to detect and resolve deadlocks with minimal side effects. We first analyze how switches in different states contribute to deadlocks. Based on the analysis, we design an election-based distributed detection scheme that efficiently and robustly identifies deadlocks. By exploiting buffer configuration redundancy, we develop an in-network collaborative packet scheduling scheme that forwards deadlocked packets to their destinations in a lossless manner, facilitating natural deadlock resolution. Additionally, we implement a barrier mechanism to ensure in-order packet delivery to the receiver. Both analysis and experiments demonstrate that Roundabout effectively detects and resolves deadlocks while minimizing side effects to the network, making it an ideal enhancement for PFC switches.

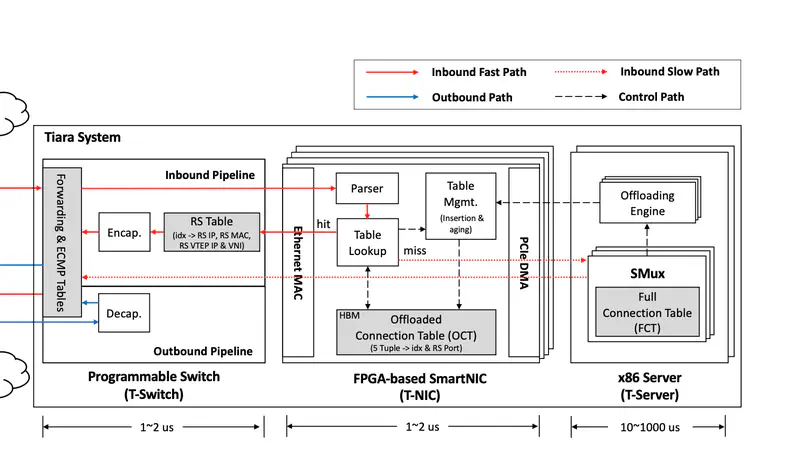

Stateful layer-4 load balancers (LBs) are deployed at datacenter boundaries to distribute Internet traffic to backend real servers. To steer terabits-per-second traffic, traditional software LBs scale out with many expensive servers. Recent switch-accelerated LBs scale up efficiently, but fail to offload a massive number of concurrent flows into limited on-chip SRAMs. This paper presents Tiara, a hardware architecture for stateful layer-4 LBs that aims to support a high traffic rate (> 1 Tbps), a large number of concurrent flows (> 10M), and many new connections per second (> 1M), without any assumption on traffic patterns. The three-tier architecture of Tiara makes the best use of heterogeneous hardware for stateful LBs, including a programmable switch and FPGAs for the fast path and x86 servers for the slow path. The core idea of Tiara is to divide the LB fast path into a memory-intensive task (real server selection) and a throughput-intensive task (packet encap/decap), and map each to the most suitable hardware (i.e., map real server selection to FPGAs with large high-bandwidth memory (HBM) and packet encap/decap to a high-throughput programmable switch). We have implemented a fully functional Tiara prototype, and experiments show that Tiara can achieve extremely high performance (1.6 Tbps throughput, 80M concurrent flows, 1.8M new connections per second, and less than 4 µs latency in the fast path) in a holistic server equipped with 8 FPGA cards, with high cost, energy, and space efficiency.
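
The split between real server selection and packet encapsulation described above can be pictured with a small software sketch. The Python below is a minimal illustration under stated assumptions, not Tiara's implementation: the class `StatefulL4LB`, the hash-based choice for new flows, and the dictionary-shaped "packet" are all hypothetical. It shows why the selection step is memory-intensive (one table entry per concurrent flow) while encapsulation is a stateless per-packet rewrite.

```python
import hashlib


class StatefulL4LB:
    """Toy stateful layer-4 load balancer with a per-flow connection table."""

    def __init__(self, real_servers):
        self.real_servers = list(real_servers)
        self.conn_table = {}  # flow 5-tuple -> chosen real server

    def select_real_server(self, flow):
        """Memory-intensive step: look up, or create, the flow's table entry."""
        server = self.conn_table.get(flow)
        if server is None:
            # New connection: pick a server by hashing the 5-tuple (assumed policy).
            digest = hashlib.sha256(repr(flow).encode()).digest()
            index = int.from_bytes(digest[:8], "big") % len(self.real_servers)
            server = self.real_servers[index]
            self.conn_table[flow] = server
        return server

    def encap(self, flow, payload):
        """Throughput-intensive step: wrap the packet with the server as outer destination."""
        return {"outer_dst": self.select_real_server(flow),
                "inner_flow": flow,
                "payload": payload}


lb = StatefulL4LB(["10.0.0.1", "10.0.0.2"])
flow = ("1.2.3.4", 1234, "5.6.7.8", 80, "tcp")
chosen = lb.select_real_server(flow)
lb.real_servers.append("10.0.0.3")        # the server pool changes later
still_chosen = lb.select_real_server(flow)  # existing flow keeps its server
packet = lb.encap(flow, b"hello")
```

Because established flows are pinned by the connection table, a change to the server pool does not break existing connections, which is the property that makes the balancer stateful.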